30th November 2022 was quite a big day: Microsoft-backed OpenAI released ChatGPT, an AI ‘chat bot’ which is… significantly more powerful than the 2000-era chat bots we are used to.

Someone built a virtual machine inside ChatGPT. Others used it to write short movies, code for their job, essays for school and college, and a whole lot more.

While some of these uses are positive, using ChatGPT for essays is arguably plagiarism (since ChatGPT is kind of like a massive content scraping tool: it ‘reads’ other people’s words from around the internet, then ‘mashes them up’ into a somewhat cohesive essay). At a minimum, it goes against the spirit of academia.

After all, this is how easily it can generate an answer for a GCSE English assignment, even when it’s explicitly told that it is for a school assignment:

This will naturally become quite problematic for teachers and lecturers, along with harming students’ life prospects in the long run.

But… I’m not a teacher, nor a student. So why should I care?

Well, I run a digital media business and sometimes I hire other writers for some of the articles. If these writers use AI content tools to write the articles, it can have a number of negative impacts on my business:

- While Google sort of says that AI content is fine to use, they also caution that mass scale/spammy content is not okay. Some people interpret this position to mean that you can freely use AI content, but I would argue that publishing loads of barely-edited AI content will harm people’s websites in the long run.

- These AI content tools often provide “facts” that are just plain wrong.

- I generally dislike the idea that I am paying good rates to writers who might then ‘cheat’ and use tools that generate incorrect and/or unhelpful articles in minutes.

I actually recently received AI content from an outsourced writer, who I hired on a reputable writing platform called Writer Access. This writer was not someone I regularly use; I was merely testing them out. I placed two orders with them for 1,500-2,000 word articles – articles which should take 1-2 hours each.

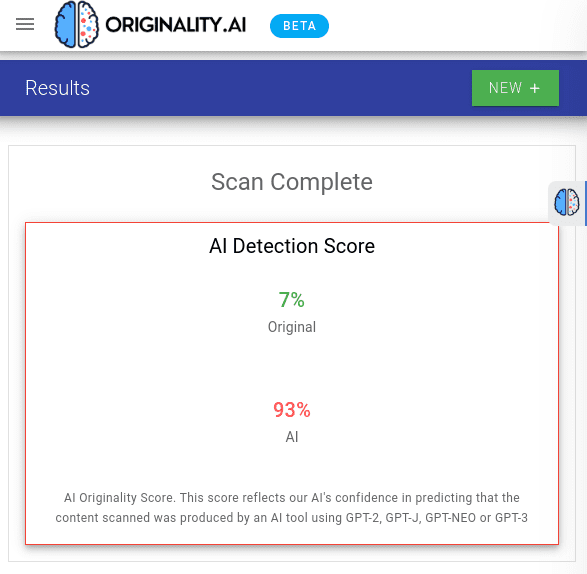

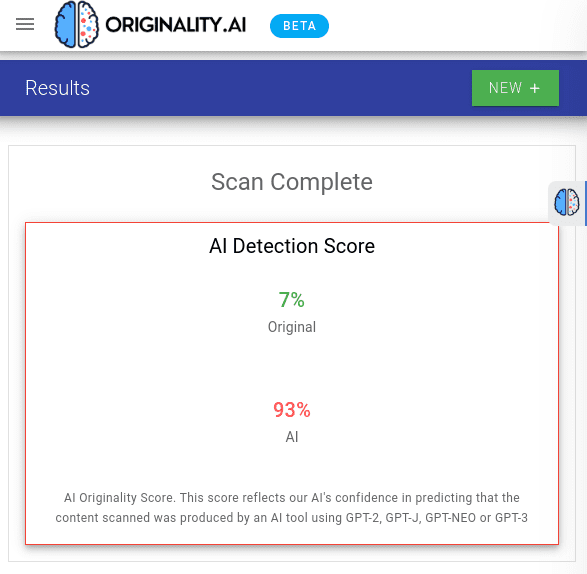

In less than an hour, the writer delivered both articles back to me. That got my alarm bells ringing, so I used a few AI content detection tools and sure enough, the writer had just used an AI writing tool:

At this point it’s clear that it is possible to detect AI/GPT content, so let’s explore the available tools for this.

10 Tools That (Claim To) Detect AI or GPT Content

There are currently ten tools (that I am aware of) for detecting GPT-written content:

- GPTZero

- OpenAI’s AI Classifier

- Originality.ai

- HuggingFace OpenAI Detector

- Writer.com’s AI Content Detector

- Content At Scale’s AI Detector

- GLTR

- CrossPlag

- SEO.ai’s Detector

- ZeroGPT

While none of these tools is perfect, most of them can be accurate – plus they are being regularly improved. So taken together, I think that they can help to turn the tide against AI content.

GPTZero

At the start of January 2023, Edward Tian announced a new anti-AI content tool: GPTZero. This tool is free to use, and after loading (it might take 5-10 seconds), you just paste your content in the box. It will then start to scan it, a process which can take 30-60 seconds.

It gives a bunch of information about the text while it’s going:

At the end of the process, you can click “Get GPTZero Result” and it will show the result as a score – along with a helpful ‘explainer’ that says whether the text is more likely to be AI or human generated.

I really like this tool: it uses an interesting technical approach, and it seems the most accurate of the tools that I have tested so far.

OpenAI’s AI Classifier

At the end of January 2023, OpenAI (the company that created ChatGPT) released their own free AI Classifier tool. Naturally, because this is from the creator of ChatGPT, it is a pretty big development in the fightback against AI content. Unfortunately, their blog post says that it currently identifies only 26% of AI-written content.

This raises the question though: do the other tools also miss 74% of AI-written content, or is OpenAI’s tool behind the others? Unfortunately that answer isn’t obvious, but it’s still worth checking out OpenAI’s tool because it did correctly catch some AI content that I tested against it:

Originality.ai

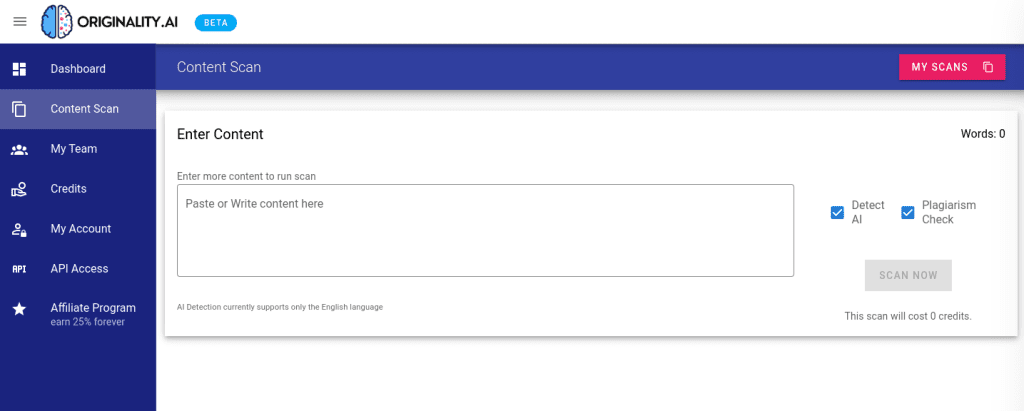

Originality.ai was launched in December 2022, and it’s a paid-for tool (it’s 1 credit per 100 words, and each credit is 1 cent – meaning that scanning a 2,000 word article will cost 20 cents). After signing up and buying pay-as-you-go credits, you enter content into the tool:

You can also scan for plagiarism with this tool, although this uses more credits. It is fairly quick, and it gives the results clearly:

I have found this tool to be decently reliable (as have other reviewers), although it sometimes says that my own content contains 10-20% AI content – even though I write every word myself.

So I wouldn’t want to test a single article (or essay) from someone and conclude that they are ‘cheating’ and using AI tools. But if multiple articles/essays from someone flag up AI content, and the other tools do too, then you can be reasonably confident that they are using AI content tools.
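If you plan to scan a lot of articles, the Originality.ai pricing quoted above (1 credit per 100 words, 1 cent per credit) is easy to turn into a quick budget estimate. The sketch below is just that arithmetic; the function name is my own, and rounding partial blocks of 100 words up to a whole credit is an assumption rather than something the tool documents here.

```python
import math

WORDS_PER_CREDIT = 100  # from the pricing above: 1 credit per 100 words
CENTS_PER_CREDIT = 1    # from the pricing above: each credit costs 1 cent


def scan_cost_cents(word_count: int) -> int:
    """Estimate the cost (in cents) of one AI scan of a text with word_count words."""
    if word_count < 0:
        raise ValueError("word_count must not be negative")
    # Assumption: a partial block of 100 words is billed as a full credit.
    credits = math.ceil(word_count / WORDS_PER_CREDIT)
    return credits * CENTS_PER_CREDIT


print(scan_cost_cents(2000))  # 20, matching the 20 cents worked out above
```

Plagiarism scans use more credits, so this only covers the AI check.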

HuggingFace

The title of the HuggingFace AI Detector webpage says “GPT-2 Output Detector”, and this tells us that while it will work with older AI tools (like Jasper), it probably won’t work with GPT-3 or GPT-3.5 tools like OpenAI’s ChatGPT.

While that’s a bit of a pity, it still detects some AI content from our earlier ChatGPT murder-mystery story:

Plus there might be a time when ChatGPT is a paid-for tool, meaning that people will go back to tools like Jasper – whose output is more reliably detected with this tool.

Writer.com

This tool is from a company (writer.com) that helps you write AI content as part of their paid-for services, and the tool is actually designed to show you when your AI content looks too suspicious, so that you can edit it further (to fool AI detection tools).

Whatever its motives, it’s still another useful tool to have in the fightback against AI content. From my testing, it doesn’t detect GPT-3 (i.e. ChatGPT) content as AI – it keeps saying it is human content.

But like HuggingFace’s tool, AI generated content from slightly older tools will still get detected:

One other downside of the tool is that it has a maximum word limit of 350 words, which isn’t all that much. But there’s nothing stopping you from copying and pasting multiple times (i.e. each chunk of an article or essay), at least.
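If copying and pasting by hand gets tedious, splitting an article into chunks that fit under the 350-word limit is simple to script. This is a minimal sketch: the function name is my own, it counts words by splitting on whitespace (the tool may count slightly differently), and it flattens paragraph breaks within each chunk.

```python
def split_into_chunks(text: str, max_words: int = 350) -> list[str]:
    """Split text into consecutive chunks of at most max_words words each."""
    if max_words <= 0:
        raise ValueError("max_words must be positive")
    words = text.split()  # splits on any whitespace, so line breaks are lost
    return [
        " ".join(words[start:start + max_words])
        for start in range(0, len(words), max_words)
    ]


# Usage: an 800-word article becomes three chunks to paste in one at a time.
article = " ".join(f"word{i}" for i in range(800))
for number, chunk in enumerate(split_into_chunks(article), start=1):
    print(number, len(chunk.split()))
```

Bear in mind that each chunk is scored separately, so a short final chunk gives the detector less text to work with.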

Content At Scale

Content At Scale are similar to writer.com (they are an AI-powered content service), but their AI detection tool seems better to me. I tested it with older (GPT-2) AI content, along with the murder-mystery story from ChatGPT, and it detected high levels of AI content on both:

GLTR

This free tool is a bit more technical in nature – it doesn’t simply say “this is AI content”, or “this is clearly human written”. The GLTR webpage actually has instructions on its use, before you click through to the live demo tool.

In general, though, the more green you have in the results, the more likely it is to be AI generated content. And when considering this rule of thumb, it worked decently well with various AI texts that I tried out:

However, I suspect that this tool would be easy to fool (with light human editing), and the more powerful AI content tools get, the less effective this tool will become.
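To make the “more green” rule of thumb a little more concrete: GLTR colours each word by how highly a language model ranked it among its predictions for that position (green for the top 10, yellow for the top 100, red for the top 1,000, and purple for anything beyond that). The sketch below does not run GLTR or any model; it just takes a list of ranks and reports the share of each colour. The ranks in the example are made up for illustration.

```python
from collections import Counter

COLOURS = ("green", "yellow", "red", "purple")


def colour_for_rank(rank: int) -> str:
    """Map a 1-based prediction rank to its GLTR-style colour bucket."""
    if rank <= 10:
        return "green"
    if rank <= 100:
        return "yellow"
    if rank <= 1000:
        return "red"
    return "purple"


def colour_shares(ranks: list[int]) -> dict[str, float]:
    """Return the fraction of words that fall into each colour bucket."""
    if not ranks:
        raise ValueError("ranks must not be empty")
    counts = Counter(colour_for_rank(rank) for rank in ranks)
    return {colour: counts[colour] / len(ranks) for colour in COLOURS}


# Hypothetical ranks for a ten-word sentence (not real GLTR output).
example_ranks = [1, 3, 2, 15, 1, 7, 240, 1, 5000, 2]
print(colour_shares(example_ranks))
```

A very high green share suggests predictable, machine-like text, but as with GLTR itself there is no fixed cut-off that proves anything on its own.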

CrossPlag

CrossPlag is a feature-rich plagiarism detection tool that also has an AI content detection tool. From my testing, it correctly identified a range of AI content – including from ChatGPT. It then correctly said that my own content was human generated.

Unlike some other tools, this one doesn’t have a really low character/word limit (which is a nice benefit). However, it’s worth noting that the tool stops you using it after just 3 tries; you then need to register for an account to use it more.

Nonetheless, this seems a fairly good AI content detector.

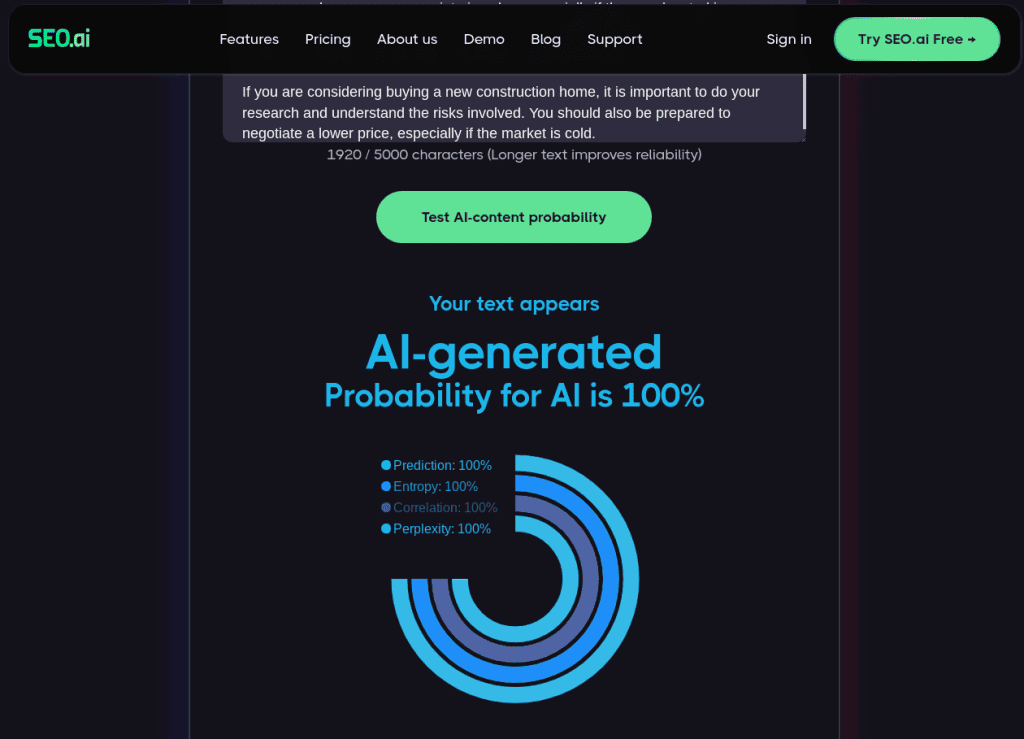

SEO.ai’s Detector

SEO.ai offers a free AI detector on their website which supports up to 5,000 characters (approx 1,000 words), and it seems pretty accurate to me. I tested it out on older GPT-2 and GPT-3 content and it detected it easily – along with highlighting the entropy, correlation, prediction and sentence perplexity, which is neat:

I also tested the tool with GPT-4 and it still detected the content. Finally, I generated content (using the same prompt) in Bard and it again had no issues detecting that the content was AI generated:

So while SEO.ai is an AI content generation tool first and foremost, its detector is still accurate and helpful for detecting AI content.

ZeroGPT

Not to be confused with GPTZero, ZeroGPT is a simple-to-use AI content detector that claims to detect GPT-4. It also supports up to 15,000 characters – quite a lot more than many of the other tools I’ve covered. Heck, this should allow many content creators and teachers to paste in an entire article/essay.

It seems to work pretty well from my testing, easily picking up GPT-3/3.5 content – plus it highlights the exact areas where it thinks AI content was used:

I also tested it out on a longer GPT-4 generated story, and the results were also great (picking up that it was pretty much all AI generated):

So overall ZeroGPT seems like a nice – and accurate – tool to use.

A Final Note Of Caution

While these AI content detection tools seem pretty good overall, they are not 100% perfect. Plus there are tools like Grammarly and Google Docs/Mail that now use AI in their auto suggestion and writing tools. These are not AI content writing tools, but they could potentially give off some AI content signatures that trip up some of these tools.

So as I touched on earlier, I wouldn’t want to just use a single tool to test a single article/essay – and then conclude that the writer is cheating and using AI content tools. But if you test multiple articles/essays against a few of these tools, you can be more sure that AI content tools have been used.
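If you want to apply that rule of thumb consistently, it can be written down as a small check. This is only a sketch of one possible rule: the function name, the 0.8 score threshold, and the “at least 2 tools on at least 2 articles” cut-offs are all my own assumptions, and the scores in the example are made up. You would need to note down each tool’s result by hand and convert it to a 0 to 1 “likely AI” score yourself.

```python
def looks_ai_written(
    scores_by_article: dict[str, dict[str, float]],
    score_threshold: float = 0.8,  # assumption: a tool "flags" at 80%+ AI
    min_tools: int = 2,            # assumption: need agreement from 2+ tools
    min_articles: int = 2,         # assumption: need 2+ flagged articles
) -> bool:
    """Return True only if several tools flag several of a writer's articles."""
    flagged_articles = 0
    for tool_scores in scores_by_article.values():
        tools_flagging = sum(
            1 for score in tool_scores.values() if score >= score_threshold
        )
        if tools_flagging >= min_tools:
            flagged_articles += 1
    return flagged_articles >= min_articles


# Hypothetical scores (0 = human, 1 = AI) noted down from three detectors.
scores = {
    "article 1": {"tool_a": 0.95, "tool_b": 0.90, "tool_c": 0.40},
    "article 2": {"tool_a": 0.85, "tool_b": 0.88, "tool_c": 0.91},
}
print(looks_ai_written(scores))
```

Even when this returns True, treat it as a prompt to look more closely and talk to the writer, not as proof.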